Achieving generalizable manipulation is the north star for robotics learning, and while we’ve seen incredible results on specific tasks using fine-tuned VLAs in the past, this north star has remained elusive.

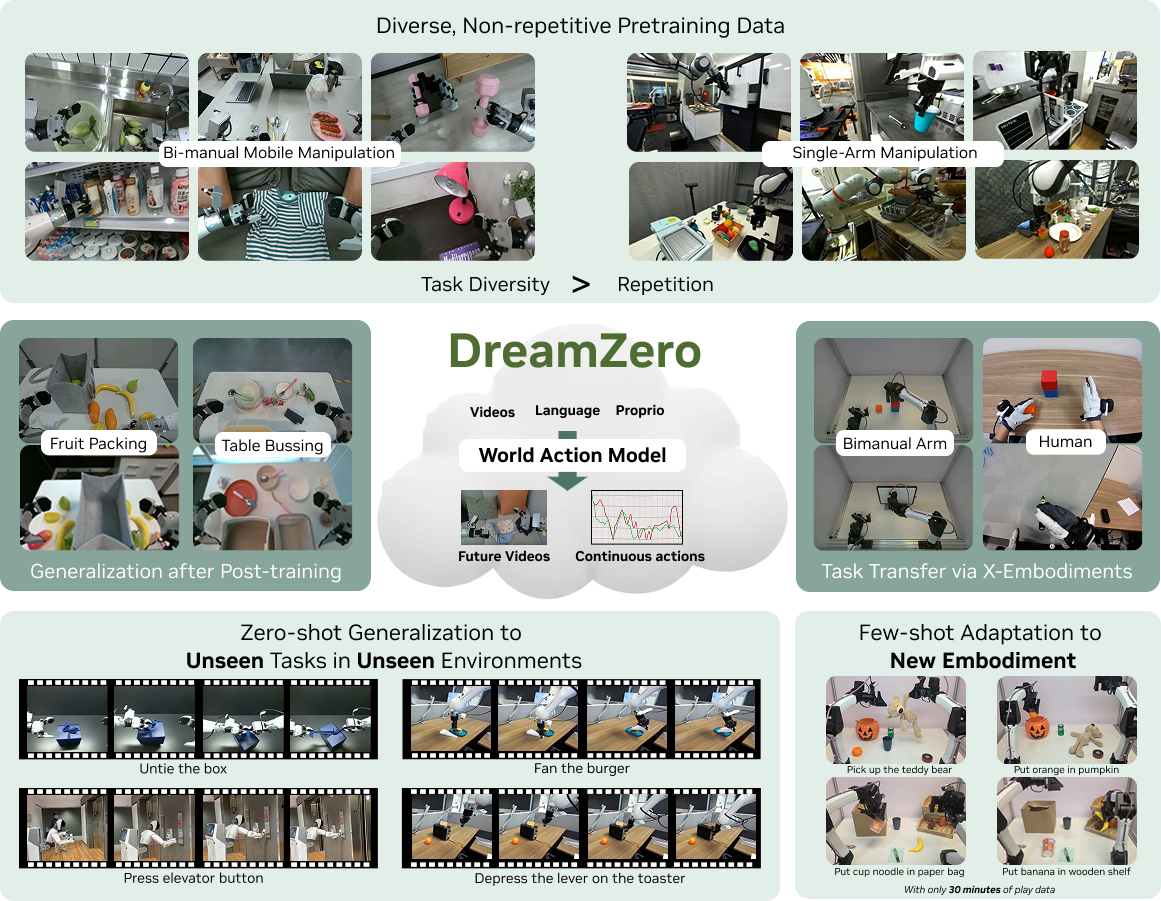

Perhaps what is needed is a different approach. DreamZero proposes World Action Models (WAMs), which jointly model both action and video to achieve state-of-the-art performance on benchmarks like MolmoSpaces and RoboArena.

Seonghyeon Ye of NVIDIA Robotics joins us to talk about building a 14B-parameter autoregressive diffusion model that achieves state-of-the-art generalization on real-world tasks and on the best available benchmarks.

Watch episode #68 of RoboPapers, with Michael Cho and Chris Paxton, now!

Abstract:

State-of-the-art Vision-Language-Action (VLA) models excel at semantic generalization but struggle to generalize to unseen physical motions in novel environments. We introduce DreamZero, a World Action Model (WAM) built upon a pretrained video diffusion backbone. Unlike VLAs, WAMs learn physical dynamics by predicting future world states and actions, using video as a dense representation of how the world evolves. By jointly modeling video and action, DreamZero learns diverse skills effectively from heterogeneous robot data without relying on repetitive demonstrations. This results in over 2x improvement in generalization to new tasks and environments compared to state-of-the-art VLAs in real robot experiments. Crucially, through model and system optimizations, we enable a 14B autoregressive video diffusion model to perform real-time closed-loop control at 7Hz. Finally, we demonstrate two forms of cross-embodiment transfer: video-only demonstrations from other robots or humans yield a relative improvement of over 42% on unseen task performance with just 10-20 minutes of data. More surprisingly, DreamZero enables few-shot embodiment adaptation, transferring to a new embodiment with only 30 minutes of play data while retaining zero-shot generalization.

Learn more:

Project Page: https://dreamzero0.github.io/

arXiv: https://arxiv.org/abs/2602.15922

GitHub: https://github.com/dreamzero0/dreamzero

You can also read Chris Paxton’s previous post on DreamZero: